Research

Our research spans six key areas in AI security.

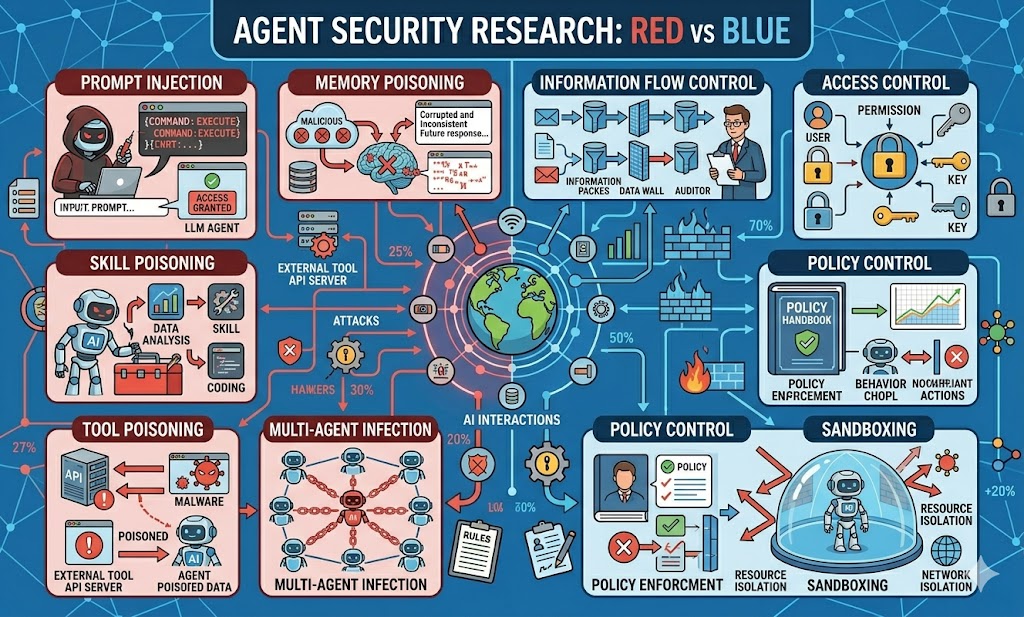

AI Agent Security

Securing autonomous AI agents against adversarial manipulation, prompt injection, and unintended behaviors in real-world deployments.

- [arXiv-2026] PI-SoK: The Landscape of Prompt Injection Threats in LLM Agents

- [arXiv-2025] AgentArmor: Enforcing Program Analysis on Agent Runtime Trace to Defend Against Prompt Injection

- [arXiv-2024] PIB-Bench: Prompt Injection Benchmark for Foundation Model Integrated Systems

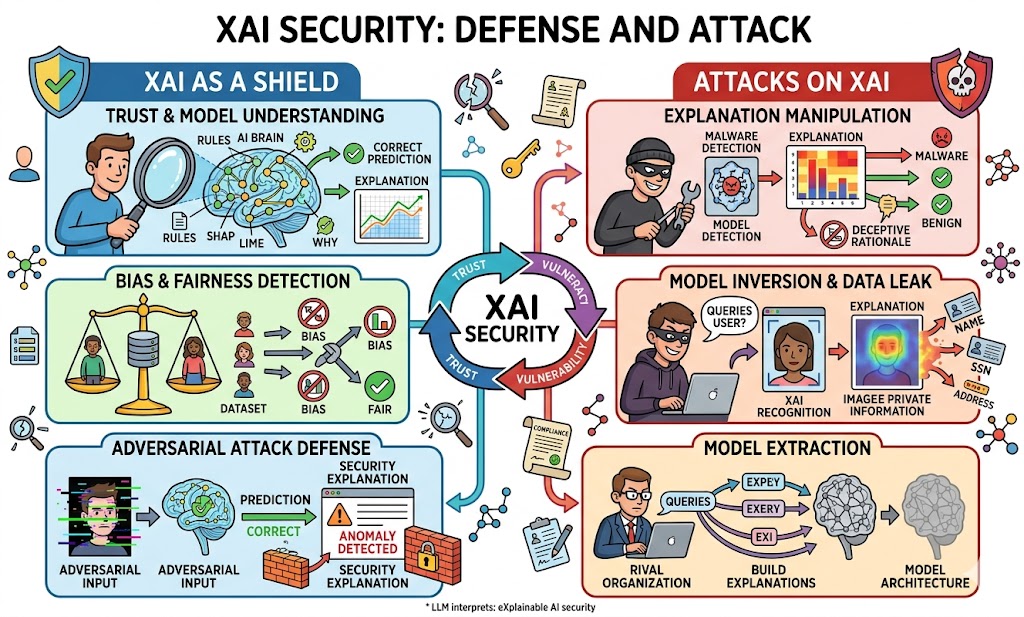

Interpretable AI Security

Leveraging interpretability and explainability techniques to understand, diagnose, and mitigate vulnerabilities in AI systems.

- [arXiv-2025] HaluProbe: What Are Models Thinking About? Understanding LLM Hallucinations Through Model Inner State Analysis

- [arXiv-2025] Halu2Jail: From Hallucinations to Jailbreaks: Rethinking the Vulnerability of Large Foundation Models

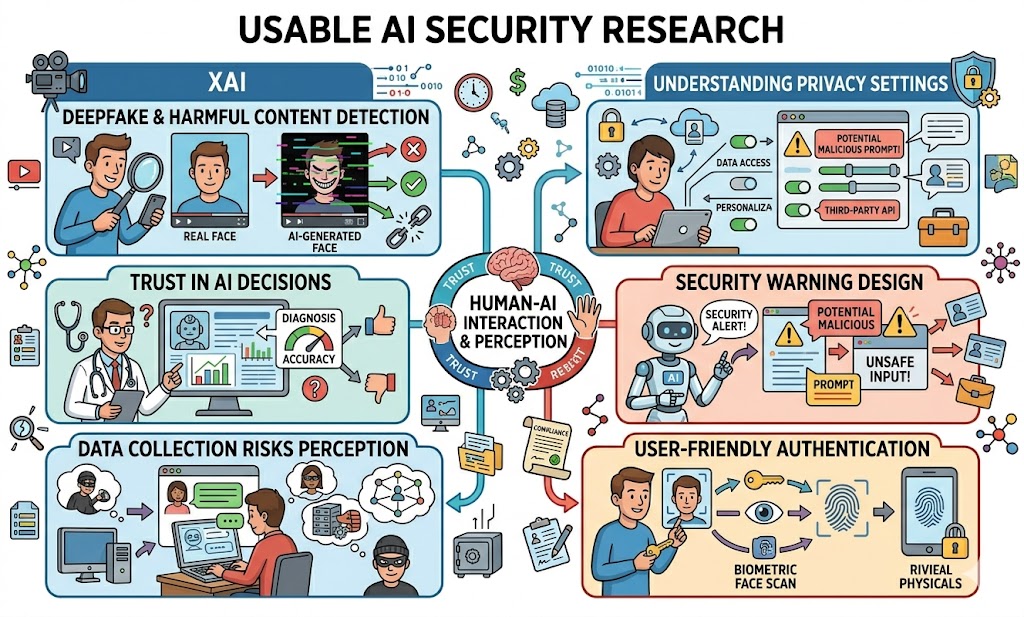

Usable Security of AI

Designing intuitive security mechanisms and interfaces that help users safely interact with and configure AI systems.

- [CCS-2024] Moderator: Moderating Text-to-Image Diffusion Models through Fine-grained Context-based Policies

- [NAACL-2025] RePD: Defending Jailbreak Attacks Through a Retrieval-based Prompt Decomposition Process

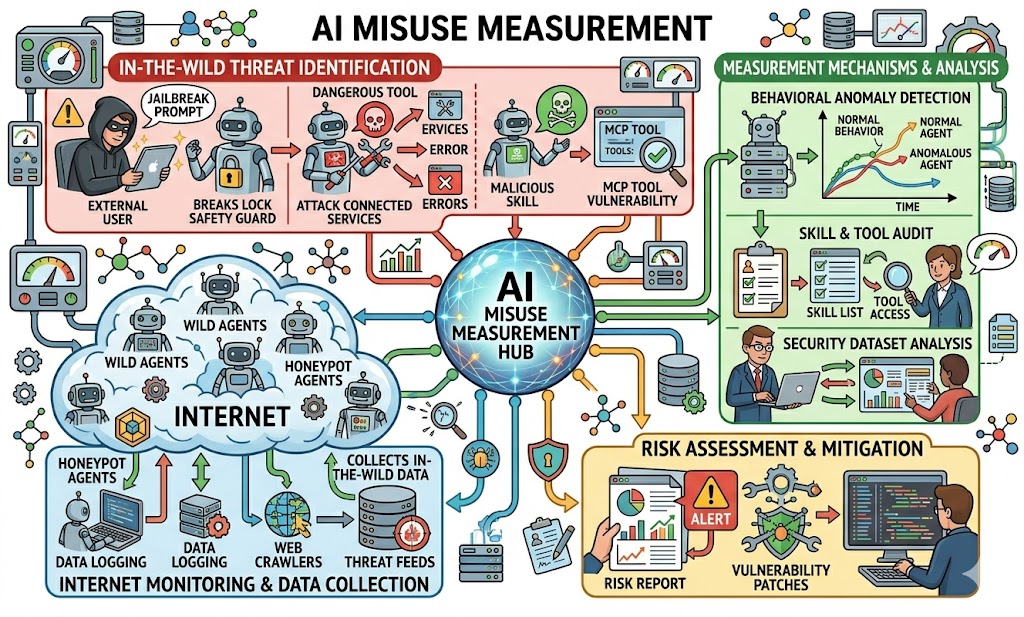

AI Misuse Measurement

Measuring how AI is misused in the wild, from Model Context Protocol (MCP) server poisoning to adversarial exploitation of AI-powered services, and developing methods to quantify such misuse at scale.

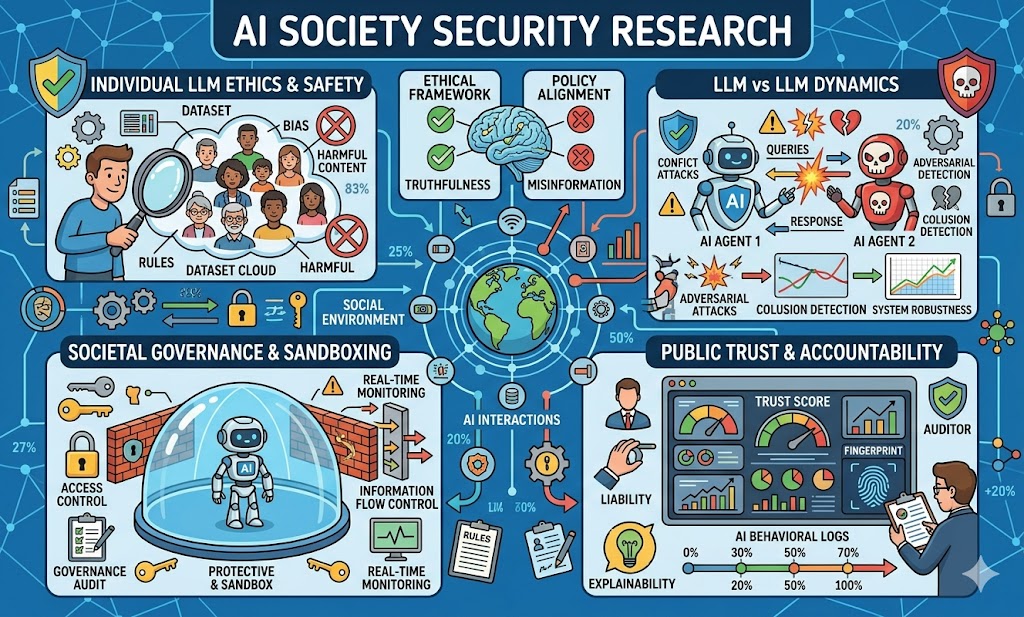

AI Society Security

Studying the safety implications of deploying AI agents as participants in social networks and human-like environments, including how they handle moral dilemmas, social norms, and trust dynamics when acting as autonomous social agents.

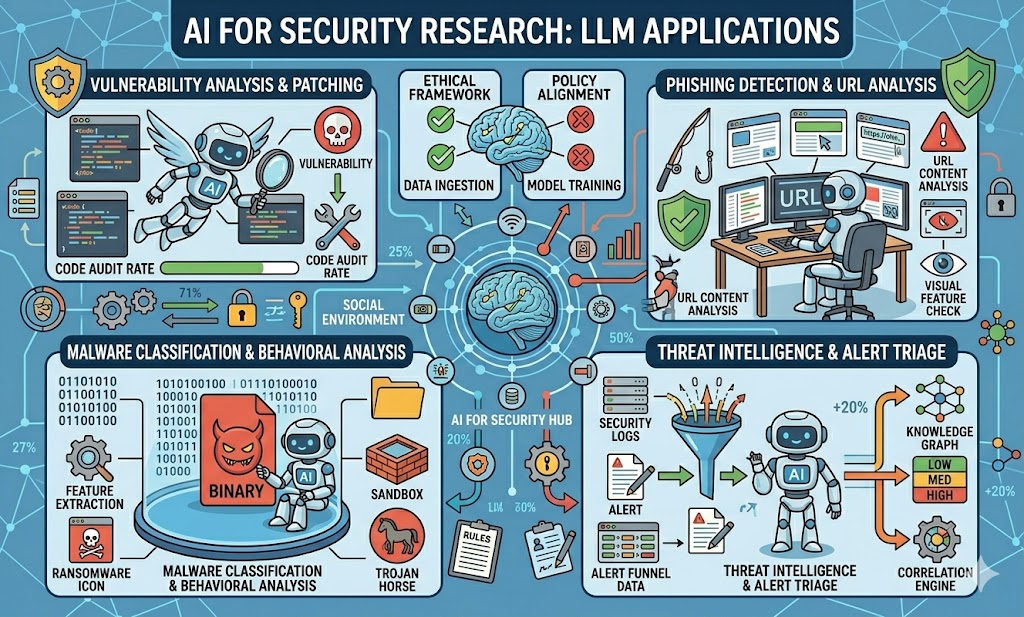

AI for Security

Applying AI techniques to strengthen cybersecurity defenses, including automated vulnerability repair, threat detection, and security analysis.